In this blog series I’m going to dissect cognitive biases and how they relate to information security. Bias is prevalent in any form of investigation, whether you’re threat hunting, reversing malware, responding to an incident, attributing network attacks, or reviewing an IDS alert. In each post, I’ll describe a specific type of bias and how it manifests in various information security specialties. But first, this post will explain some fundamentals about what bias is and why it can negatively influence investigations.

What is Bias?

Investigating security threats is a process that occurs within the confines of your mind and centers around bridging the gap between your limited perception and the reality of what has actually occurred. To investigate something is to embrace that perception and reality aren’t the same thing, but you would like for them to be. The mental process that occurs while trying to bridge that perception-reality gap is complex and depends almost entirely on your mindset.

A mindset is how you see and approach the world and is shaped by your genetics and your collective world experience. Everyone you’ve ever met, everything you’ve ever done, and every sense you’ve perceived molds your mindset. At a high level, a mindset is neither a good nor a bad thing, but it can lead to positive or negative results. This is where bias comes in.

Bias is a predisposition towards a certain way of thinking, and it can be the difference between a successful and a failed investigation. In some ways, bias is good when it allows us to learn from our previous mistakes and create mental shortcuts to solving problems. In other ways it’s bad and can lead us to waste time pursuing bad leads or jump to conclusions without adequate evidence. Let’s consider a non-technical example first.

The (very) Personal Effects of Bias

On a night like any other, I laid my head down on my pillow at about 11 PM. However, I was unexpectedly awoken around 2 AM with wrenching stomach pain unlike anything I’d ever felt before. I tossed and turned for an hour or so without relief, and finally woke my wife up. A medical professional herself, she realized this might not be a typical stomach ache and suggested we head to the emergency room.

About an hour later I was in the ER being seen by the doctor on shift that night. Based on the location of the pain, he indicated the issue was likely gallbladder related, and that I probably had one or more gallstones causing my discomfort. I was administered some pain medication and set up with an appointment with a primary care physician the next day for further evaluation.

The primary care physician also believed that my gallbladder was the likely cause of the previous night’s discomfort, so she scheduled me for an ultrasound the same day. I walked over to the ultrasound lab where they spent about twenty minutes trying to get good images of the area. Shortly thereafter, the ultrasound technician came in and looked at the image and shared his thoughts. He had identified my gallbladder and concluded that it was full of gallstones, which had caused my stomach pain. My next stop was a referral to a surgeon, where my appointment took no more than ten minutes as he quickly recommended I switch to a low-fat diet for a few weeks until he could perform surgery to remove the malfunctioning organ.

Fast forward a couple of weeks and I’m waking up from an early morning cholecystectomy operation. The doctor is there as soon as I wake up, which strikes me as odd even in my groggy state.

“Hey Chris, everything went fine, but…”

Let me take this opportunity to tell you that you never want to hear “but…” seconds after waking up from surgery.

“But, we couldn’t remove your gallbladder. It turns out you don’t have one. It’s a really rare thing and less than 0.1% of the population is born without one, but you’re one of them.”

At first I didn’t believe him. As a matter of fact, I was convinced that my wife had put him up to it and they were messing with me while I wasn’t completely with it. It wasn’t until I got home that same day and woke up again several hours later that I grasped what had happened. I really wasn’t born with a gallbladder, and the surgery had been completely unnecessary.

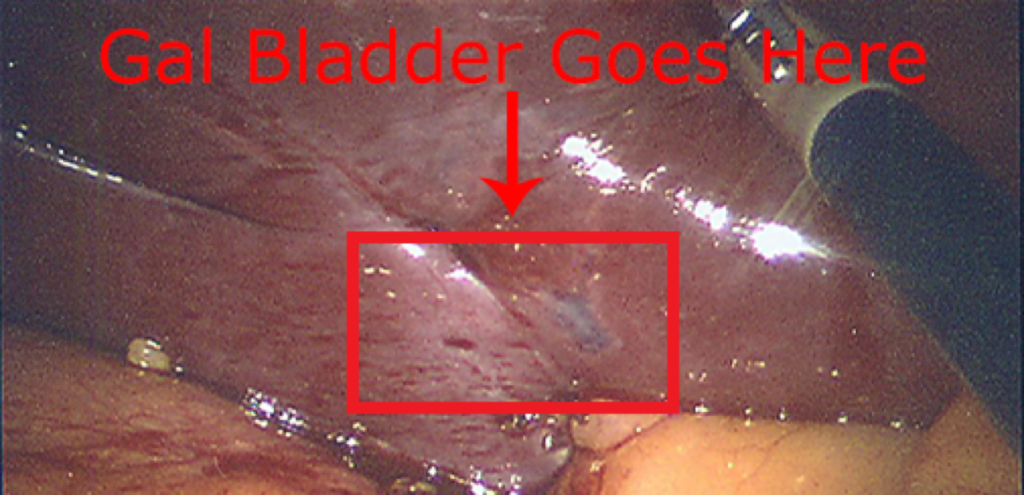

Figure 1: This is a picture of where my gallbladder should be

Dissecting the Situation

Let’s dissect what happened here.

- ER Doctor: Believed I was having gallbladder issues based on previous patients presenting with similar symptoms. Could not confirm this in the ER, so referred me to a primary care physician.

- Primary Care Doctor: Also believed the gallbladder issue was likely, but couldn’t confirm this in her office, so she referred me to a radiologist.

- Radiologist: Reviewed my scans to attempt to confirm the diagnosis of the previous two doctors. Found what appeared to be confirming evidence and concluded my gallbladder was malfunctioning.

- Surgeon: Agreed with the conclusion of the radiologist (without looking at the source data himself) and proceeded to recommend surgery, which I went through.

So where and why did things go wrong?

For the most part, the first two doctors were doing everything within their power to diagnose me. The appropriate steps of a differential diagnosis instruct physicians to identify the most likely affliction and perform the test that can rule that out. In this case, that’s the ultrasound that was performed by the radiologist, which is where things started to go awry.

The first two doctors presented a case for a specific diagnosis, and the radiologist was predisposed towards confirming it before even looking at the scans. It’s a classic case of finding something weird when you go looking for it, because everything looks a little weird when you want to find something. In truth, the radiologist wasn’t confident in his finding, but he let the weight of the other two doctors’ opinions bear down on him such that he was actually seeking to confirm their diagnosis more than trying to independently come to an accurate conclusion. This is an example of confirmation bias, which is perhaps the most common type of bias encountered. It also shows characteristics of belief bias and anchoring.

The issue here was compounded when I met the surgeon. Rather than critically assessing the collective findings of all the professionals that were involved to this point, he assumed a positive diagnosis and merely glanced at the ultrasound results in passing. All of the same biases are repeated here again.

In my case bias led to an incorrect conclusion, but understand that even if my gallbladder had been the issue and the surgery went as expected, the bias was still there. In many (and sometimes most) cases, bias might exist and you will still reach the correct conclusion. That doesn’t mean that the same bias won’t cause you to stumble later on.

Consequences of Bias

In my story, you see that bias resulted in some fairly serious consequences. Surgery is a serious matter, and I could have suffered some type of complication while on the table, or a post-op infection that could have resulted in extreme sickness or death.

In any field, bias influences conclusions and those conclusions have consequences when they result in action being taken. In my case, it was a few missed days of work and a somewhat painful recovery. In security investigations, bad decisions can result in wasted time pursuing leads or missing something important. The latter could have drastic effects if you work for a government, military, ICS environment, or any other industry where lives depend on system integrity and uptime. Even in normal commercial environments, bad decisions resulting from the influence of bias can lead to millions of lost dollars.

Let’s examine one other effect of this scenario. I’m a lot less likely to trust the conclusions of doctors now, and I’m a lot less likely to agree to surgery without a plethora of supporting evidence indicating the need for it. These things in themselves are a form of bias. This is because bias breeds additional bias. We saw this with the relationship between the radiologist and the surgeon as well.

The effects of bias are highly situational. It’s best not to think of bias as something that dramatically changes your entire outlook on a situation. Think of bias like a pair of tinted glasses that subtly influences how you perceive certain scenarios. When the right bias meets the right set of circumstances, things can go bad quickly.

Countering Bias

Bias is insanely hard to detect because of how it develops in either an individual or group setting.

In an individual setting, bias is inherent to your own decision making. Since humans inherently stink at detecting their own bias, it is unlikely you will become aware of it unless your analysis is reviewed by someone else who points it out. Even then, bias usually exists in small amounts and is unlikely to be noticed unless it meets a certain threshold that is noticeable by the reviewer.

In a group setting, things get complicated because bias enters from multiple people in small amounts. This creates a snowball effect in which group bias exists outside the context of any specific individual. Therefore, if handoffs occur in a linear manner such that each person only interacts one degree downstream or upstream, it is only possible to detect overwhelming bias at the upstream levels. Unfortunately, in real life these upstream levels are usually where the people are less capable of detecting the bias because they have less subject matter expertise in the field. In security, think managers and executives trying to catch this bias instead of analysts.

Let me be clear – you can’t fully eliminate bias in an investigation or in most other walks of your life. The way humans are programmed and the way our mindsets work prohibit that, and honestly, you wouldn’t want to eliminate all bias because it can be useful at times too. However, you can minimize the effects of negative bias in a couple of ways.

Evidence-Based Conclusions

A conclusion without supporting evidence is simply a hypothesis or a belief. In both medicine and information security, belief isn’t good enough without data to support it. This is challenging in many environments because visibility isn’t always in all the places we need it, and retention might not be long enough to gather the information you need when an event occurred far enough in the past. Use these instances to drive your collection strategy and justify appropriate budget for making sound conclusions.

In my case, two doctors made hypotheses without evidence. Another doctor gathered weak evidence and made a bad decision, and another doctor confirmed that bad decision because the evidence was presented as a certainty when it actually wasn’t. A review of the supporting facts would have led the surgeon to catch the error before deciding to operate.

Peer Review

By definition, you aren’t acutely aware of your own bias. Your mindset works the way it works and that is “normal” to you, so your ability to spot biased decisions when you make them is limited. After all, nobody comes to a conclusion they know to be incorrect. As Sheldon from The Big Bang Theory would say, “If I was wrong, don’t you think I’d know it?”

This is where peers come in. The surgeon might have caught the radiologist’s error if he had thoroughly reviewed the ultrasound results. Furthermore, if there was a peer review system in place in the radiology department, another person might have caught the error before it even got that far. Other people are going to be better at identifying your biases than you are, so that is an opportunity to embrace your peers and pull them in to review your conclusions.

Knowledge of Bias

What little hope you have of identifying your own biases doesn’t manifest during the decision-making process, but instead during the review of your final products and conclusions. When you write reports or make recommendations, be sure to identify the assumptions you’ve made. Then, you can review your conclusions as weighed against supporting evidence and assumptions to look for places bias might creep in. This requires a knowledge of common types of bias and how they manifest, which is precisely the purpose of this series of blog posts.

Most physicians are trained to understand and recognize certain types of bias, but that simply failed in my case until after the major mistakes had been made.

Conclusion

The investigation process provides an opportunity for bias to affect conclusions, decisions, and outcomes. In this post I described a non-technical example of how bias can creep in while attempting to bridge the gap from perception to reality. In the rest of this series I’ll focus on specific types of bias and provide technical and non-technical examples of the bias in action, along with strategies for recognizing the bias in yourself and others.

As it turns out, my stomach aches eventually stopped on their own. I never spoke to the radiologist again, but I did speak to the surgeon at length and he readily admitted his mistake and felt horrible for it. Some people might be furious at this outcome, but in truth, I empathized with his plight. Complex investigations, be they medical or technical, present opportunity for bias and we all fall victim to it from time to time. We’re all only human.

—

If you’re interested in learning more about how to help diminish the effects of bias in an investigation, take a look at my Investigation Theory course where I’ve dedicated an entire module to it. This class is only taught periodically, and registration is limited.