In the first part of this series I told a personal story to illustrated the components and effects of bias in a non-technical setting. In each post following I’ll examine a specific type of bias, how it manifests in a non-technical example, and provide real-world examples where I’ve seen this bias negatively affect a security practitioner. In this post, I’ll discuss anchoring.

In the first part of this series I told a personal story to illustrated the components and effects of bias in a non-technical setting. In each post following I’ll examine a specific type of bias, how it manifests in a non-technical example, and provide real-world examples where I’ve seen this bias negatively affect a security practitioner. In this post, I’ll discuss anchoring.

Anchoring occurs when a person tends to rely too heavily on a single piece of information when making decisions, most often based on information received early in the decision-making process.

Anchoring Outside of Security

Think about the average price of a Ford car. Is it higher or lower than 80,000? This number is clearly too high, so you’d say lower. Now let’s flip this a bit. I want you to think about the average price of a Ford car again. Is it higher or lower than 10,000? Once again, the obvious answer is that it’s higher.

These questions may seem obvious and innocent, but here’s the thing. Let’s say that I ask one group of people the first question, and a separate group of people the second question. After that, I ask both groups to name what they think the average price of a Ford car is. The result is that the first group presented with the 80,000 number would pick a price much higher than the second group presented with the 10,000 number. This has been tested in multiple studies with several variants of this scenario [1].

In this scenario, people are subconsciously fixating on the number they are presented and it is subtly influencing their short term mindset. You might be able to think of a few cases in sales where this is used to influence consumers. Sticking with our car theme, if you’ve been on a car lot you know that every car has a price that is typically higher than what you pay. By pricing cars higher initially, consumers anchor to that price. Therefore, when you negotiate a couple thousand dollars off, it feels like you’re getting a great deal! In truth, you’re paying what the dealership expected, you just perceive the deal because of the anchoring effect.

They key takeaway from these examples is that anchoring to a specific piece of information is not inherently bad. However, making judgements in relation to an anchored data point where too much weight is applied can negatively effect your realistic perception of a scenario. In the first example, this led you to believe the average price of a car is higher or lower than it really is. In the second example, this led you to believe you were getting a better deal than you truly were.

Anchoring in Security

Anchoring happens based on the premise that mindsets are quick to form but resistant to change. We quickly process data to make an initial assessment, but our ability to hone that assessment is generally weighed in relation to the initial assessment. Can you think of various points in a security practitioner’s day where there is an opportunity for an initial perception into a problem scenario? This is where we can find opportunities for anchoring to have occurred.

List of Alerts

Let’s consider a scenario where you have a large number of alerts in a queue that you have to work through. This is a common scenario for many analysts, and if you work in a SOC that isn’t open 24×7 then you probably walk in each morning to something similar. Consider this list of the top 5 alerts in a SOC over a twelve hour period:

- 41 ET CURRENT_EVENTS Neutrino Exploit Kit Redirector To Landing Page

- 14 ET CURRENT_EVENTS Evil Redirector Leading to EK Apr 27 2016

- 9 ET TROJAN Generic gate[.].php GET with minimal headers

- 2 ET TROJAN Generic -POST To gate.php w/Extended ASCII Characters (Likely Zeus Derivative)

- 2 ET INFO SUSPICIOUS Zeus Java request to UNI.ME Domain

Which alert should be examined first? I polled this question and found that a significant number of inexperienced analysts chose the one at the top of the list. When asked why, most said because of the frequency alone. By making this choice, the analyst assumes that each of these alerts are weighted equally. By occurring more times, the rule at the top of the list represents a greater risk. Is this a good judgement?

In reality, the assumption that each rule should be weighted the same is unfounded. There are a couple of ways to evaluate this list.

Using a threat-centric approach, not only does each rule represent a unique threat that should be considered uniquely, some of these alerts gain more context in the presence of others. For example, the two unique Zeus signatures alerting together could pose some greater significance. In this case, the Neutrino alert might represent a greater significance if it was paired with an alert representing the download of an exploit or communication with another Neutrino EK related page. Merely hitting a redirect to a landing page doesn’t indicate a successful infection, and is a fairly common event.

You could also evaluate this list with a risk-centric approach, but more information is required. Primarily, you would be concerned with the affected hosts for each alert. If you know where your sensitive devices are on the network, you would evaluate the alerts based on which ones are more likely to impact business operations.

This example illustrates the how casual decisions can come with implied assumptions. Those assumptions can be unintentional, but they can still lead you down the wrong path. In this case, the analyst might spend a lot of time pursuing alerts that aren’t very sensitive while delaying the investigation of something that represents a greater risk to the business. This happens because it is easy to look at a statistic like this and anchor to a singular facet of the stat without fully considering the implied assumptions. Statistics are useful for summarizing data, but they can hide important context that will keep you from making uninformed decisions that are the result of an anchoring bias.

Visualizations without Appropriate Context

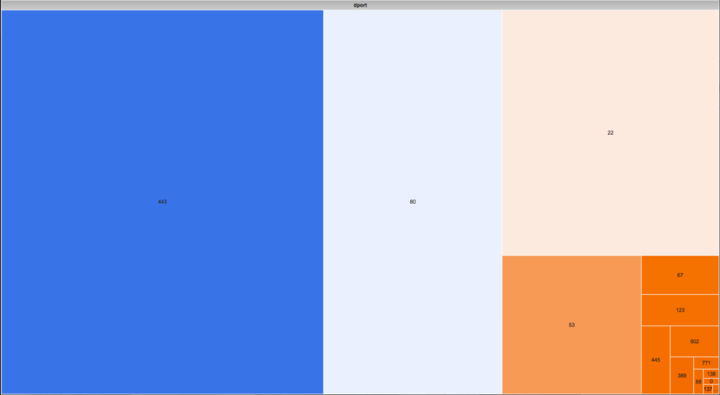

As another example, understand that numbers and visual representations of them have a strong ability to influence an investigation. Consider a chart like the one in the figure below.

This is a treemap visualization used to show the relative volume of communication based on network ports for a single system. The larger the block, the more communication occurred to that port. Looking at this chart, what is the role of the system whose network communication is represented here? Many analysts I polled decided it was a web server because of the large amount of port 443 and 80 traffic. These ports are commonly used by web servers to receive requests.

This is where we enter the danger zone. An analyst isn’t making a mistake by looking at this and considering that the represented host might be a web server. The mistake occurs when the analyst fully accepts this system as a web server and proceeds in an investigation under that assumption. Given this information alone, do you know for sure this is a web server? Absolutely not.

First, I never specified whether this treemap exclusively represents inbound traffic, and it’s every bit as likely that it represents outbound communication that could just be normal web browsing. Beyond that, this chart only represents a finite time period and might not truly represent reality. Lastly, just because a system is receiving web requests doesn’t necessarily mean its primary role is that of a web server. It might simply have a web interface for managing some other service that is its primary role.

The only way to truly ascertain whether this system is a web server is to probe it to see if there is a listening service on a web server port or to retrieve a list of processes to see if a web server application is running.

There is nothing wrong with using charts like this to help characterize hosts in an investigation. This treemap isn’t an inherently bad visualization and it can be quite useful in the right context. However, it can lead to investigations that are influenced by unnecessarily anchored data points. Once again, we have an input that leads to an assumption. This is where it’s important to verify assumptions when possible, and at minimum identify your assumptions in your reporting. If the investigation you are working on does end up utilizing a finding based on this chart and the assumption that it represents a web server, call that out specifically so that it can be weighed appropriately.

Diminishing Anchoring Bias

The stories above illustrate common places anchoring can enter the equation during your investigations. Throughout the next week, try to look for places in your daily routine where you form an initial perception and there is an opportunity for anchoring bias to creep in. I think you’ll be surprised at how many you come across.

Here are a few ways you can recognize when anchoring bias might be affecting you or your peers and strategies for diminishing its effects:

Consider what data represents, and not just it’s face value. Most data in security investigations represents something else that it is abstracted from. An IP address is abstracted from a physical host, a username is abstracted from a physical user, a file hash is abstracted from an actual file, and so on.

Limit the value of summary data. A summary is meant to be just that, the critical information you need to quickly triage data to determine its priority or make a quick (but accurate) judgement of the underlying events disposition. If you carry forward input from summary data into a deeper investigation, make sure you fully identify and verify your assumptions.

Don’t let your first impression be your only impression. Rarely is the initial insertion point into an investigation the most important evidence you’ll collect. Allow the strength of conclusions to be based on your evidence collected throughout, not just what you gathered at the onset. This is a hard thing to overcome, as your mind wants to anchor to your first impression, but you have to try and overcome that and try to examine cases holistically.

An alert is not an answer, it’s merely a question. Your job is to prove or disprove the alert, and until you’ve done one of those things the alert is not representative of a certainty. Start looking at alerts as the impetus for asking questions that will drive your investigation.

—

If you’re interested in learning more about how to help diminish the effects of bias in an investigation, take a look at my Investigation Theory course where I’ve dedicated an entire module to it. This class is only taught periodically, and registration is limited.

[1] Strack, F., & Mussweiler, T. (1997). Explaining the enigmatic anchoring effect: Mechanisms of selective accessibility. Journal of personality and social psychology, 73(3), 437.