As analysts gain experience investigating threats, they naturally develop mastery of evidence. That mastery includes the ability to collect evidence, comfort parsing it with multiple tools, and an understanding of which sources can answer specific questions. A true expert knows which evidence to turn to at any given point and is comfortable using it to make decisions. While there are numerous resources for learning about evidence sources, one topic I haven’t seen discussed enough publicly is the intent behind an evidence source’s creation. In this article, I’ll describe what evidence intent means and how expert investigators leverage this concept when making decisions.

Intentional Evidence

The goal of any investigation is to build a timeline of events, and every event in that timeline that is considered fact must be supported by evidence. Any conclusion without evidence is merely an opinion or an educated guess.

For the analyst, every bit of evidence used during an investigation exists because someone wanted it to be there. That someone clearly isn’t the miscreant responsible for the attack; it’s normally the developer who wrote the software that created it. Sometimes the developer specifically sets out to create evidence, knowing that a human will use it to determine whether a specific event occurred. The developer puts thought into the exact conditions under which the evidence will be created, where it will be stored, how it will be accessed, the format it will be displayed in, how it can be exported, and a number of other factors that make it easier for a human analyst to review.

Intentional evidence exists with the explicit purpose of attesting to an event that occurred.

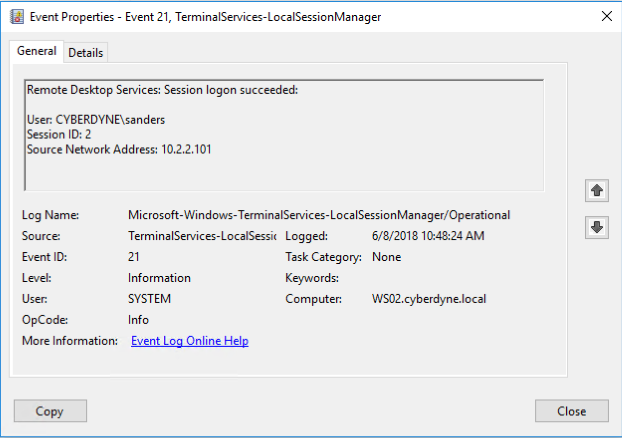

The most common example of intentional evidence is the set of logs generated by the operating system. These logs are thoroughly documented, and each field has a descriptive name and a plain-text value. In operating systems like Windows, they are accessed with a dedicated tool, Event Viewer, which includes several features for searching and filtering them. Field values are consistent, which makes it easier to pull logs into SIEM or log management tools for bulk searching, aggregation, and longer-term trending. Simply put, intentional evidence is usually created with human analysis top of mind.

Figure 1: Windows Event Logs are intended to be evidence
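To make the point about descriptive field names concrete, here is a minimal Python sketch that flattens a Windows event, as rendered in Event Viewer’s XML view, into a dictionary of named fields ready for a SIEM. The sample record is a trimmed, hypothetical logon event (ID 4624) written for illustration, not real log data, and the function name `event_to_record` is likewise hypothetical. Because the developer designed the format for analysts, each value already arrives labeled.

```python
import xml.etree.ElementTree as ET

# Namespace used by Windows event XML.
NS = {"e": "http://schemas.microsoft.com/win/2004/08/events/event"}

# Hypothetical, trimmed logon event (ID 4624) for illustration only.
SAMPLE_EVENT = """\
<Event xmlns="http://schemas.microsoft.com/win/2004/08/events/event">
  <System>
    <Provider Name="Microsoft-Windows-Security-Auditing"/>
    <EventID>4624</EventID>
    <TimeCreated SystemTime="2018-06-01T12:00:00.000Z"/>
    <Computer>WKSTN-01</Computer>
  </System>
  <EventData>
    <Data Name="TargetUserName">alice</Data>
    <Data Name="LogonType">2</Data>
  </EventData>
</Event>
"""


def event_to_record(xml_text: str) -> dict:
    """Flatten one event into a dict of descriptive field names and values."""
    root = ET.fromstring(xml_text)
    system = root.find("e:System", NS)
    record = {
        "Provider": system.find("e:Provider", NS).get("Name"),
        "EventID": int(system.find("e:EventID", NS).text),
        "TimeCreated": system.find("e:TimeCreated", NS).get("SystemTime"),
        "Computer": system.find("e:Computer", NS).text,
    }
    # Each EventData field carries its own name, so no guesswork is needed.
    for data in root.iterfind("e:EventData/e:Data", NS):
        record[data.get("Name")] = data.text
    return record


if __name__ == "__main__":
    print(event_to_record(SAMPLE_EVENT))
```

Note how little interpretation the parser has to do: the structure and names come straight from the source, which is exactly what intentional evidence looks like in practice.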

Unintentional Evidence

Let’s pretend you’re a developer and you’ve created a search engine. You start to notice that users often query the same thing multiple times. Because you love your users, you decide to save their search history so that when they start typing, the search box auto-completes the term. For example, a user who types “ba” will see the search engine auto-complete “basketball”. To accomplish this feat, you’ll store a copy of each user’s search history on their workstation. So the history file doesn’t grow forever, you’ll timestamp each entry and purge entries more than a month old.

This reasoning is straightforward to a developer and serves a specific purpose: enhancing the search engine’s feature set. It is very intentional. However, the feature also creates a forensic evidence source with timestamped entries for every search a user has performed within the retention window. This is unintentional but beneficial to security analysts. The developer didn’t do anything extra to help the security analyst; the usefulness of this artifact is merely a byproduct of other development.
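The developer’s logic above fits in a few lines of Python. This is a minimal sketch under stated assumptions: the `SearchHistory` class, the in-memory list standing in for the on-disk history file, and the 30-day window are all hypothetical. Nothing in it was written with an analyst in mind, yet the entries it keeps form a timestamped activity timeline.

```python
from datetime import datetime, timedelta

# Hypothetical retention window standing in for "purge entries over a month old".
RETENTION = timedelta(days=30)


class SearchHistory:
    """Hypothetical auto-complete history, built for convenience, not for evidence."""

    def __init__(self):
        # (timestamp, term) tuples, oldest first; a real product would persist these to disk.
        self.entries = []

    def record(self, term: str, when: datetime) -> None:
        self.entries.append((when, term))

    def suggest(self, prefix: str):
        """Return the most recently searched term starting with prefix, or None."""
        for _when, term in reversed(self.entries):
            if term.startswith(prefix):
                return term
        return None

    def purge(self, now: datetime) -> None:
        """Drop entries older than the retention window so the file stays small."""
        cutoff = now - RETENTION
        self.entries = [(w, t) for w, t in self.entries if w >= cutoff]


if __name__ == "__main__":
    history = SearchHistory()
    history.record("basketball", datetime(2018, 5, 1, 9, 0))
    print(history.suggest("ba"))  # → basketball
    history.record("how to disable antivirus", datetime(2018, 6, 2, 22, 15))
    history.purge(now=datetime(2018, 6, 5))
    print(history.suggest("ba"))  # → None (the May 1 entry aged out)
    # To an analyst, whatever survives the purge is a timeline of user activity.
    for when, term in history.entries:
        print(when.isoformat(), term)
```

The purge step also previews a limitation discussed later: retention is set by the feature’s needs, not an investigator’s.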

Unintentional evidence is evidence that was created as a byproduct of some other non-attestation function.

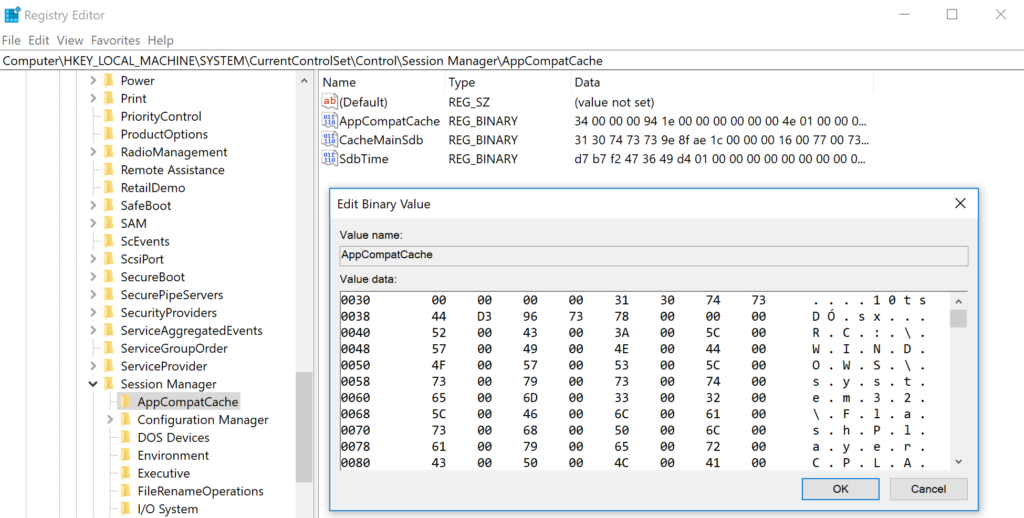

This type of scenario is more common than you think, and several of the forensic evidence sources that analysts frequently rely on came from similar features. Take, for example, the Microsoft Application Compatibility Cache, also known as ShimCache. Windows uses this feature to look up modules and determine whether shimming is required for an application to execute, allowing legacy applications to remain compatible with modern operating systems. For that function to work correctly, the operating system keeps a log of executed files to examine for compatibility needs. In the hands of a forensic analyst, that log can help prove application execution, for both legitimate and malicious binaries [1].

Figure 2: The ShimCache has another primary function but can serve as evidence.

Unintentional evidence sources are numerous and often result from features intended to make life easier for the user or to speed up execution through caching. The concept of evidence intent primarily applies to evidence that already exists and is merely retrieved (like various OS and file system artifacts). It does not necessarily apply to all evidence. For example, network traffic is neither intentional evidence nor the byproduct of a specific feature. It isn’t the byproduct of a function; it is the entirety of the function. Ultimately, it is probably more appropriate to think of evidence intent as a spectrum rather than a binary, with a given source simply being more or less intentional.

How Evidence Intent Affects Analysis

Expert analysts already understand the role of evidence intent, even if they’ve never thought of it in those terms. Intent affects how analysts approach the evidence, how much they trust it, and what they expect when deciding whether to pursue it for answers. Some evidence sources naturally rise to the top of an analyst’s toolkit, and the distinction between intentional and unintentional evidence influences which ones do.

Unintentional evidence generally has the following characteristics:

It often has several quirks or exceptions. ShimCache entries are only written to the registry when the system shuts down or reboots [1]. The value names under the UserAssist registry key (also used for tracking user execution) are ROT13 encoded [2]. The last printed time in an MS Word document isn’t updated if the user doesn’t save the document afterward. These aren’t things you would expect of something meant to serve an evidentiary purpose. They aren’t obvious, they aren’t always documented, and sometimes you have to discover them the hard way.

It requires more parsing effort. The evidence may never have been meant to be pulled into a SIEM or batch analyzed, so it might not be in a structured format (such as JSON), or it may be far more complex than necessary (deeply nested XML, for example). The data might also be encoded or surrounded by less useful data, making it hard to locate and extract in a useful form.

It is often poorly documented. Developers don’t necessarily expect anyone to use these artifacts as evidence, so they rarely bother to document them. As a result, some artifacts aren’t discovered by forensic analysts until years later, and even then they are only loosely documented. This makes it difficult to understand the nuances of what you’re looking at.

It may have multiple names. Earlier, I mentioned that what Microsoft calls the Application Compatibility Cache is better known as ShimCache in forensic circles. This name divergence can confuse new analysts and make it more difficult to search for information about the source.

It may not be as reliable. When data isn’t intended to serve as evidence, its retention usually isn’t a concern. This is common with volatile or frequently overwritten sources like system memory, prefetch, or the page file. When you look for something in these sources, it may already be gone.

It may cease to exist at any point. As goes the evidence’s primary purpose, so goes the evidence. The evidence may disappear once its primary function is no longer needed, which happens when software vendors phase out features. It can also happen when legal barriers arise, as with the EU’s General Data Protection Regulation (GDPR), which has limited the value of the WHOIS data analysts often use to research malicious domains [3].
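The UserAssist quirk mentioned above is a small but typical example of the extra parsing effort unintentional evidence demands. The Python sketch below decodes ROT13-encoded value names using the standard library’s `rot_13` text codec. The sample names are illustrative, the helper `decode_userassist_name` is hypothetical, and reading values from a registry hive (or interpreting the binary data stored with each value) is out of scope.

```python
import codecs


def decode_userassist_name(encoded: str) -> str:
    """Undo ROT13: letters shift 13 places; digits and punctuation pass through unchanged."""
    return codecs.decode(encoded, "rot_13")


if __name__ == "__main__":
    # Illustrative encoded value names, not taken from a real system.
    samples = [
        "HRZR_PGYFRFFVBA",
        "P:\\Jvaqbjf\\flfgrz32\\pzq.rkr",
    ]
    for name in samples:
        print(f"{name} -> {decode_userassist_name(name)}")
```

Because ROT13 is its own inverse, the same call both encodes and decodes; the difficulty for analysts is not the cipher but knowing it is there at all.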

If you’ve been slinging logs for years, you probably already factor these things into your decision-making during analysis. You are probably less likely to ask investigative questions that require unintentional evidence, and less likely to trust conclusions derived from it. I’ve observed these tendencies in my research on the investigation process, and they are more pronounced in less experienced practitioners. Furthermore, newer analysts find it harder to learn the value of unintentional evidence than that of intentional evidence.

Conclusion

Evidence is nuanced and complex. In this article, I’ve highlighted one facet of that nuance: whether data is intended to be used as evidence or serves that purpose only as a byproduct. This is an important consideration, particularly for new analysts, because unintentional evidence comes with baggage that intentional evidence may not. As you go forward in your investigations, I challenge you to consider which of the evidence sources you query regularly might be unintentional evidence. Does that change how you interpret the data? Are there biases (good or bad) inherent to that data source? Have you shied away from the source for any of the reasons I mentioned? These are important questions as you continue to evolve your mastery of evidence sources and the investigation process.

References:

[1] https://www.fireeye.com/content/dam/fireeye-www/services/freeware/shimcache-whitepaper.pdf

[2] https://www.magnetforensics.com/artifact-profiles/artifact-profile-userassist/

[3] https://krebsonsecurity.com/2018/02/new-eu-privacy-law-may-weaken-security/