I’ve argued for some time that information security is in a growing state of cognitive crisis. Even with a deluge of freely available information, we do a poor job of identifying and teaching the skills necessary to be a competent practitioner across the variety of available specialties. Most new professionals must rely too heavily on direct observation and self-study, while universities are broadly failing to produce job-ready graduates.

We’re not the only profession to weather this storm. Medicine, law, accounting, and other well-established fields have been here. Learning from them, we can identify the signs that define a cognitive crisis:

Demand for expertise greatly outstrips supply. A tremendous number of jobs go unfilled, mostly positions for experienced professionals. Because so many organizations need experienced staff, they are unable to invest appropriately in entry-level jobs or devote the time internal training requires. Scarcity drives significantly higher salaries for practitioners with in-demand skills, and hyper-specialization follows.

Most information cannot be trusted or validated. There are few authoritative sources of knowledge about critical components and procedures. Practitioners rely heavily on first-hand experience and third-party observations that haven’t been peer-reviewed or externally validated. This makes it hard to learn new information reliably, and even harder to develop best practices and measure success.

Large systemic issues persist with no ability to tackle them in a mobilized or strategic manner. The industry is unable to organize or broadly combat the biggest issues it faces. For medicine, this might be plagues and public health crises; for accounting, wide-scale unchecked embezzlement resulting from “cooked books.” For information security, worms from the 2000s are still rampant, hundreds of thousands of internet-facing hosts still expose vulnerable SMB ports, and the spread of ransomware shows no sign of slowing. Many of the biggest problems we faced twenty years ago still exist or have gotten worse.

Because other well-established fields have been in cognitive crisis and come out the other side more formalized and effective, there is hope for information security as well. We can learn from these other fields and have our own cognitive revolution. There are three things that must happen:

- We must thoroughly understand the processes used to draw conclusions.

- Experts must develop repeatable, teachable methods and techniques.

- Educators must build and advocate pedagogy that teaches practitioners how to think.

At the center of all three pillars of the cognitive revolution is the concept of a mental model.

Mental Models

A mental model is simply a way to view the world. We are surrounded by complex systems, so we create models to simplify things.

You use mental models all the time. Here are a few examples:

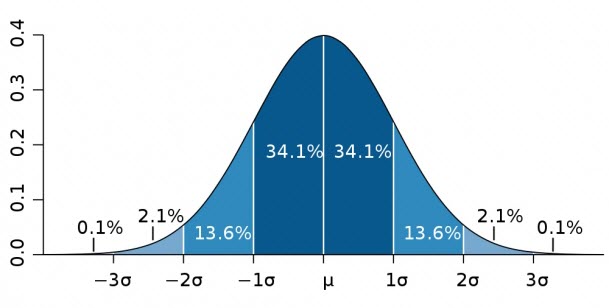

Distribution and the Bell Curve. In normally distributed data, most data points cluster around the middle. For example, most people are of average intelligence, whereas very few are of extremely low or extremely high intelligence.
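If you want to see the bell curve in numbers, here’s a minimal Python sketch using the standard library’s `statistics.NormalDist`. It assumes a standard normal distribution (mean 0, standard deviation 1), and `share_within` is a helper name I made up for illustration. It computes the fraction of data within one, two, and three standard deviations of the mean, which lands near the familiar 68%, 95%, and 99.7%.

```python
from statistics import NormalDist


def share_within(k: float, dist: NormalDist = NormalDist(mu=0.0, sigma=1.0)) -> float:
    """Fraction of a normal distribution lying within k standard deviations of its mean."""
    lower = dist.mean - k * dist.stdev
    upper = dist.mean + k * dist.stdev
    return dist.cdf(upper) - dist.cdf(lower)


for k in (1, 2, 3):
    print(f"within {k} standard deviation(s): {share_within(k):.1%}")
```

Roughly two-thirds of the data sits within a single standard deviation of the mean, which is the sense in which “most” data points are average.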

Operant Conditioning. In many cases, the consequence that follows a behavior dictates how likely an animal is to repeat that behavior. If a mouse gets food whenever it presses a lever, it is more likely to press the lever. If you get sick every time you eat pineapple, you aren’t likely to eat pineapple very often.
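Operant conditioning can be caricatured as a simple update rule. The Python sketch below is a toy, not a formal psychological model: it assumes a hypothetical `update` function that nudges the probability of a behavior toward 1 after a reward and toward 0 after a punishment, with an arbitrary learning rate of 0.2.

```python
def update(p: float, rewarded: bool, rate: float = 0.2) -> float:
    """Move the probability of a behavior toward 1 after a reward, toward 0 after a punishment."""
    target = 1.0 if rewarded else 0.0
    return p + rate * (target - p)


def simulate(p0: float, outcomes: list[bool], rate: float = 0.2) -> list[float]:
    """Return the probability of the behavior before and after each outcome."""
    history = [p0]
    for rewarded in outcomes:
        history.append(update(history[-1], rewarded, rate))
    return history


# The mouse gets food every time it presses the lever (hypothetical starting probability).
lever = simulate(0.1, [True] * 5)
# You get sick every time you eat pineapple.
pineapple = simulate(0.5, [False] * 3)

print([round(p, 2) for p in lever])      # rises toward 1
print([round(p, 2) for p in pineapple])  # falls toward 0
```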

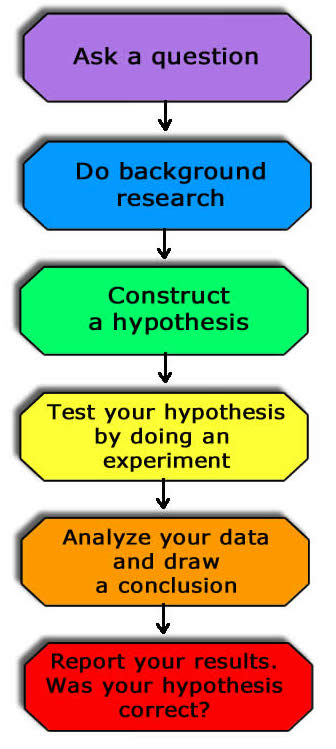

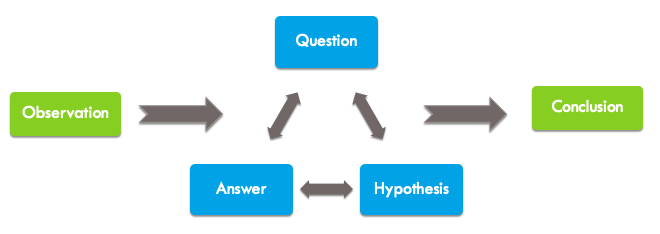

The Scientific Method. Scientific discovery generally follows this process: ask a question, form a hypothesis, conduct an experiment to test that hypothesis, and report the findings. We use this not only for individual research but also to validate the research of others by evaluating the congruence between key questions, hypotheses, experimental procedures, and conclusions.

Each one of these models simplifies something complex in a way that is useful for both practitioners and educators. They are mindsets used to interpret problems and make decisions. In another sense, models are tools used to solve problems. The more tools in your toolbox, the better equipped you are to tackle an array of problems.

Applied Models

A professional field relies on mental models to simplify complex processes for education and practice. These are specialty tools, applicable to an individual profession. Medicine has done a great job of developing mental models over the past hundred years as it went through its cognitive revolution. Even with 60,000 possible diagnoses and 4,000 procedures, medical professionals create models like:

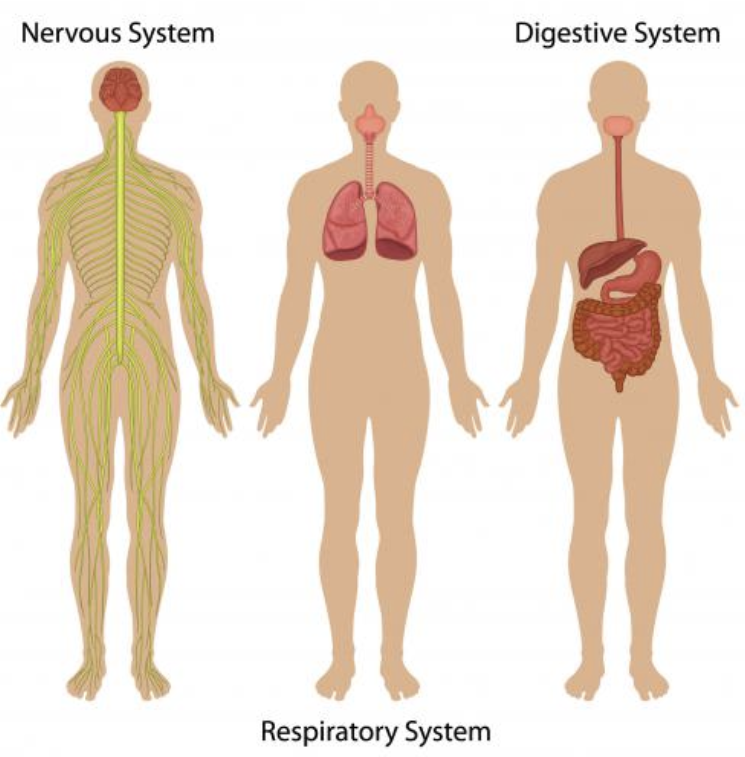

13 Organ Systems. The body has a lot of components, but they can be organized into discrete, mostly self-contained systems. Specialists usually focus on one of these systems so they can limit their deep learning to that system and its “handoffs” with other systems. This makes the scope of learning more manageable.

4 Vital Signs. The human body is enormously complex and constantly changing. Four primary vital signs are used to detect meaningful changes: temperature, respiration rate, blood pressure, and pulse. Medical professionals at all levels are adept at collecting this information on an ongoing basis as a constant means of preliminary diagnostic assessment.
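Here’s a rough Python sketch of the “detect meaningful changes” idea: record a patient’s baseline for the four vital signs and flag any sign that moves too far from it. The `Vitals` type, the readings, and the per-sign tolerances are all hypothetical values chosen for illustration, not clinical guidance, and blood pressure is reduced to the systolic number to keep it short.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Vitals:
    temperature_c: float     # degrees Celsius
    respiration_rate: float  # breaths per minute
    systolic_bp: float       # mmHg (diastolic omitted to keep the sketch short)
    pulse: float             # beats per minute


# Hypothetical absolute tolerances per sign; illustrative only, not clinical thresholds.
TOLERANCE = {"temperature_c": 1.0, "respiration_rate": 4, "systolic_bp": 20, "pulse": 20}


def flag_changes(baseline: Vitals, current: Vitals) -> list[str]:
    """Return the vital signs whose change from baseline exceeds their tolerance."""
    flagged = []
    for f in fields(Vitals):
        change = abs(getattr(current, f.name) - getattr(baseline, f.name))
        if change > TOLERANCE[f.name]:
            flagged.append(f.name)
    return flagged


baseline = Vitals(temperature_c=37.0, respiration_rate=14, systolic_bp=118, pulse=72)
current = Vitals(temperature_c=38.6, respiration_rate=22, systolic_bp=121, pulse=96)
print(flag_changes(baseline, current))  # ['temperature_c', 'respiration_rate', 'pulse']
```

The model’s value is that four cheap, repeatable measurements against a baseline tell you where to look next, long before a full diagnosis.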

10-Point Pain Scale. Pain is an important diagnostic measurement because it can provide insight into treatment effectiveness, but it’s difficult to measure objectively. While simple, the graphical pain scale establishes a baseline, which helps doctors and patients communicate effectively about relative changes in pain.

Computing also has mental models you’ve relied on heavily, even though you might not have called them that. For example:

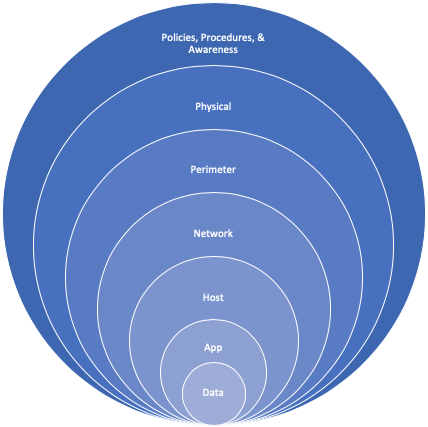

Defense in Depth. An approach to security architecture where complementary categories of security controls (detective, protective, reactive, etc.) are layered to provide a comprehensive security posture.
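One way to see why layering helps is to estimate how often an attack slips past every layer. This Python sketch assumes the layers fail independently and uses made-up miss rates for one control in each category. Real controls often share blind spots, which is exactly why the categories should be complementary rather than redundant.

```python
from math import prod


def evasion_probability(miss_rates: dict[str, float]) -> float:
    """Chance an attack slips past every layer, assuming the layers fail independently."""
    return prod(miss_rates.values())


# Hypothetical miss rates for one layer from each category.
layers = {
    "protective: email attachment filtering": 0.30,
    "detective: endpoint alerting": 0.20,
    "reactive: analyst triage": 0.50,
}

print(f"all layers: {evasion_probability(layers):.3f}")
for name in layers:
    # Drop one layer at a time to see how much each contributes.
    remaining = {k: v for k, v in layers.items() if k != name}
    print(f"without {name!r}: {evasion_probability(remaining):.3f}")
```

No single layer here is impressive on its own, but together they let only about 3% of attacks through, and removing any one layer raises that figure noticeably.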

The OSI Model. A hierarchical classification of networking communication functions used to design and analyze communication protocols and their interactions. OS and application developers use this to architect functionality and boundaries. Administrators and analysts use this to troubleshoot and investigate network-related problems and incidents.
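As a sketch of how administrators lean on the OSI model when troubleshooting, the Python below maps a few familiar protocols to the layer they’re conventionally assigned and lists the layers to verify from the bottom up. The protocol table is deliberately small, and `layers_to_check` is a hypothetical helper, not part of any tool.

```python
OSI_LAYERS = {
    1: "Physical",
    2: "Data Link",
    3: "Network",
    4: "Transport",
    5: "Session",
    6: "Presentation",
    7: "Application",
}

# A small, illustrative mapping of protocols to the layer they are conventionally assigned.
PROTOCOL_LAYER = {"Ethernet": 2, "IP": 3, "ICMP": 3, "TCP": 4, "UDP": 4, "DNS": 7, "HTTP": 7}


def layers_to_check(protocol: str) -> list[str]:
    """Bottom-up troubleshooting: every layer from Physical up to the failing protocol's layer."""
    top = PROTOCOL_LAYER[protocol]
    return [OSI_LAYERS[n] for n in range(1, top + 1)]


print(layers_to_check("TCP"))  # ['Physical', 'Data Link', 'Network', 'Transport']
```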

The Investigation Process. Investigating security threats generally follows this process: receive a suspicious input, ask investigative questions, form a hypothesis, seek answers, and repeat until reaching a conclusion. Security analysts use this process to build event timelines and decide which evidence to analyze.
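The investigation loop can be sketched as a queue of questions: answer one, record the finding, and add whatever new questions the answer raises until none remain. In this Python sketch the evidence table and the questions in it are hypothetical, and forming and testing hypotheses is left to the analyst rather than modeled.

```python
from collections import deque

# Hypothetical evidence: each question maps to (answer, follow-up questions it raises).
EVIDENCE = {
    "What process made the suspicious outbound connection?": (
        "powershell.exe",
        ["What launched powershell.exe?"],
    ),
    "What launched powershell.exe?": (
        "winword.exe opening invoice.docm",
        ["Where did invoice.docm come from?"],
    ),
    "Where did invoice.docm come from?": ("an email attachment from an external sender", []),
}


def investigate(first_question: str, evidence: dict, max_steps: int = 10) -> list[str]:
    """Answer questions and chase follow-ups until none remain, recording each finding."""
    findings = []
    pending = deque([first_question])
    while pending and len(findings) < max_steps:
        question = pending.popleft()
        if question not in evidence:
            # No answer yet: note the gap so someone collects more data.
            findings.append(f"{question} -> unanswered")
            continue
        answer, follow_ups = evidence[question]
        findings.append(f"{question} -> {answer}")
        pending.extend(follow_ups)
    return findings


for step in investigate("What process made the suspicious outbound connection?", EVIDENCE):
    print(step)
```

Each answer raises the next question, and the chain of findings is the raw material for an event timeline.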

You’re constantly using all sorts of mental models as you go about your day, but they don’t exist in a vacuum. We each experience the world through our own unique lens that is the product of biology and our lived experiences. Our perspective constantly changes, but not by rapidly switching between individual lenses that we use one at a time. Instead, our lens consists of a latticework of many models layered together.

Sometimes the models that form our lens are complementary. For example, Occam’s Razor is a model we rely on that suggests the simplest explanation is usually correct. A doctor might use this principle along with mental models for deductive reasoning and evaluation of mental state to determine that someone who is losing weight rapidly is simply not eating enough, perhaps due to depression. That sounds simple in a sentence or two, but getting there requires immense thoughtfulness and awareness.

Other times we reason through competing models that pull against each other. This often occurs when larger constructs, like religion and citizenship, introduce mental models that are at odds with each other. Christianity dictates “thou shalt not kill,” but democracy dictates that citizens must go to war to preserve their freedoms. These conflicts create internal strife where compromises are often made.

Every time we learn a new way to structure our thinking, we incorporate new mental models into the latticework that is our lens. Everything we think about or act upon filters through this latticework and the models are constantly pushing or pulling at one another.

Model Desperation

Information security practitioners desperately crave new models, further highlighting the cognitive crisis. In fact, we often take good models and overuse them or extend them beyond their practical purpose.

The MITRE ATT&CK matrix is a framework of adversary tactics and techniques that presents a categorized list of common techniques used to describe computer network attacks. It’s a great model that’s useful in a variety of ways, and honestly, we’ve needed something like this for a while.

However, because we’re so model-hungry, I’ve started to run across organizations that have abandoned other sound security principles and successful ongoing initiatives in pursuit of checking every item off the ATT&CK “list.” Similarly, I’ve seen new security organizations center their entire detection and prevention strategies around ATT&CK without first defining their threat model, identifying their high-value assets, or gaining any sense of the risk they want to mitigate. That’s a recipe for failure. None of this is MITRE’s fault; they have even promoted presentations that discuss how ATT&CK should and should not be used and that highlight the framework’s limitations.

The problem is that we’re model-hungry, and we’ll rapidly use and abuse any reasonable model that presents itself. Ultimately, we want good models because we want a robust toolbox. But not every job calls for a hammer, and we don’t need fourteen circular saws.

What Makes a Good Model?

I’ve already mentioned a few models used in computing that help simplify education and practice, but we need more that are useful and widely accepted. What makes a mental model good?

A good model is simple. Models exist to overcome complexity. If the model is more complex or nuanced than the thing it helps make sense of, it becomes less useful. The way someone expresses the model matters significantly as well. A 20-page paper describing a model’s purpose and application is useful, but a simple graphic that conveys its overall use will do far more for adoption and acceptance. You can represent a model with a graphic, a table, or even a simple set of categories.

A good model is useful. The model must be broad enough to apply to enough people and situations that it becomes widely known, but specific enough that individuals can use it to gain practical understanding in specific scenarios. Think of a model as an on-ramp to the highway. The entrance must be easy to find and approach at slow speed. Once there, it should provide a mechanism for getting up to speed quickly so you can move at a favorable pace. Great models connect people from their existing knowledge to complex concepts.

A good model is imperfect. Most models are generalizations created through the process of inductive reasoning. There are always edge cases, and these exceptions to the rule are important because they provide a mechanism for falsifying a model. A key to understanding a theory or system is the ability to know when it does not work. Because models are imperfect, they cannot be applied to every situation. A model should have clear criteria for its use so that it isn’t over-applied in situations that are not appropriate.

Creating Models

Most models are created through inductive reasoning. Inductive reasoning is the process of forming generalizations based on lived experience, observations, or collected data. If you investigate several cases that involve the malicious use of obfuscated PowerShell scripts, you may start to generalize that obfuscated PowerShell scripts are likely to be malicious. This generalization isn’t a model, but it is a heuristic (a rule of thumb) that can be useful in an investigation. It could be combined with several other heuristics and organized into a model for investigations, evidence, or something else.
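To show what a heuristic looks like in practice, here’s a Python sketch that scores a PowerShell command line against a few common traits of obfuscation. The indicator list and the two-indicator threshold are my own hypothetical choices, and the result is a reason to look closer, not a verdict. A heuristic like this is itself a product of inductive reasoning, so it inherits every weakness of the observations behind it.

```python
import re

# Hypothetical indicators of obfuscation; each is common in malicious scripts but not proof of malice.
INDICATORS = {
    "encoded command flag": re.compile(r"\s-(e|enc|encodedcommand)\s", re.IGNORECASE),
    "base64 decoding": re.compile(r"FromBase64String", re.IGNORECASE),
    "invoke-expression": re.compile(r"\b(iex|invoke-expression)\b", re.IGNORECASE),
    "backtick escaping": re.compile(r"\w`\w"),
}


def matched_indicators(command_line: str) -> list[str]:
    """Names of the indicators found in a PowerShell command line."""
    return [name for name, pattern in INDICATORS.items() if pattern.search(command_line)]


def worth_a_closer_look(command_line: str, threshold: int = 2) -> bool:
    """Rule of thumb: flag a command line that matches at least `threshold` indicators."""
    return len(matched_indicators(command_line)) >= threshold


samples = [
    r"powershell.exe -NoProfile -File C:\scripts\backup.ps1",
    "powershell.exe -nop -enc SQBFAFgA",
    "powershell -w hidden -c \"I`EX([Text.Encoding]::Unicode.GetString([Convert]::FromBase64String('SQBFAFgA')))\"",
]
for cmd in samples:
    print(worth_a_closer_look(cmd), matched_indicators(cmd))
```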

Inductive reasoning is only as strong as the number and diversity of the observations the conclusions are based on. I once worked with an analyst who saw the Nginx web server used as part of attacker infrastructure a couple of times. They had never seen this web server used legitimately before, so they inductively reasoned that Nginx was generally used by bad actors. Of course, Nginx is used by all sorts of legitimate entities, so the inductive heuristic wasn’t based on an appropriate sample and it led this person to poor conclusions and wasted time down the road.
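A quick way to see why a couple of observations aren’t enough is to put a confidence interval around them. This Python sketch uses the Wilson score interval (my choice of method, not something from the analyst’s work) on the “2 of 2 Nginx sightings were malicious” sample and on a hypothetical larger sample. The interval only accounts for sample size; it can’t correct for a sample that was biased from the start, which is the diversity half of the problem.

```python
from math import sqrt


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a proportion observed as successes / n."""
    if n == 0:
        return (0.0, 1.0)  # no observations, no information
    p_hat = successes / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return (max(0.0, center - half_width), min(1.0, center + half_width))


# The analyst's sample: Nginx seen twice, both times on attacker infrastructure.
low, high = wilson_interval(2, 2)
print(f"2 of 2 malicious: {low:.2f} to {high:.2f}")

# A hypothetical broader sample: 3 malicious servers among 500 Nginx servers observed.
low, high = wilson_interval(3, 500)
print(f"3 of 500 malicious: {low:.3f} to {high:.3f}")
```

Even taking the two sightings at face value, the data is consistent with Nginx being malicious barely a third of the time, which is far from “generally used by bad actors.”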

When creating a model, the standard of evidence is even higher. The model must be based on a tremendous number of observations across a wide array of dimensions.

I find that most models begin by asking the right sort of question. For example, is a hot dog a sandwich?

That isn’t the right question. But, it can lead to the right question. Regardless of your opinion, even a brief discussion will eventually lead you to ask, “What defines a sandwich?”

That is the right question. To answer it you’ll probably enumerate the properties of a sandwich and the relationships between those properties.

A sandwich has multiple layers, the outer layer is usually carb-based, and so on.

You’ll refine those properties by highlighting clear examples of sandwiches and non-sandwiches, while also having nuanced discussions of edge cases.

An Italian hero is clearly a sandwich. A pizza clearly isn’t. What’s the difference? How can that be applied to a hot dog?

These discussions should be rich and include diverse perspectives. That diversity will surface more edge cases and cultural differences you might not have thought of.

What about a taco? That’s one culture’s form of a sandwich. How does that apply?

The discussion creates value. Those who ask and answer the right questions are those who have done the legwork to arrive at a useful model.
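The same refine-against-examples loop can be written down. In this Python sketch, the `Food` attributes, the two candidate definitions, and the way each food is encoded are hypothetical choices for illustration: a first-draft definition is checked against clear examples, a counterexample forces a refinement, and the refined definition is checked again.

```python
from collections.abc import Callable
from dataclasses import dataclass


@dataclass(frozen=True)
class Food:
    name: str
    layers: int                # distinct stacked components
    carb_outside: bool         # is an outer layer carb-based?
    carb_top_and_bottom: bool  # does the carb enclose the filling above and below?


def definition_v1(f: Food) -> bool:
    # First draft: multiple layers with a carb-based outer layer.
    return f.layers >= 2 and f.carb_outside


def definition_v2(f: Food) -> bool:
    # Refined after a counterexample: the carb must sit on top and bottom.
    return definition_v1(f) and f.carb_top_and_bottom


# Clear examples any definition must agree with.
LABELED = [
    (Food("Italian hero", 3, True, True), True),
    (Food("pizza", 3, True, False), False),
]


def disagreements(definition: Callable[[Food], bool], labeled: list[tuple[Food, bool]]) -> list[str]:
    """Names of clear examples that a candidate definition gets wrong."""
    return [food.name for food, expected in labeled if definition(food) != expected]


print(disagreements(definition_v1, LABELED))  # ['pizza']
print(disagreements(definition_v2, LABELED))  # []
```

Edge cases like the hot dog don’t show up as disagreements here because they aren’t clear examples. They show up instead as arguments about how to encode an attribute like `carb_top_and_bottom`, which is where the useful discussion happens.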

By the way, the cube rule provides one take on whether a hot dog is a sandwich. Just don’t shoot the messenger.

Conclusion

Mental models help us make better decisions and learn faster. Models are tools that help us simplify complexity, and they are critical in the practice of any profession. For information security to evolve past our cognitive crisis, we must become more adept at developing, utilizing, and teaching good models. If you’d like to learn about more models specific to information security, I’ve included some in the references list below.

References

- Simon, H. A. (1957). Models of Man: Social and Rational.

- Page, S. (2018). The Model Thinker: What You Need to Know to Make Data Work for You. Basic Books, New York.

- The Bell Curve: https://www.mathsisfun.com/data/standard-normal-distribution.html

- Operant Conditioning: https://www.simplypsychology.org/operant-conditioning.html

- The Scientific Method: https://sciencebob.com/science-fair-ideas/the-scientific-method/

- The OSI Model: https://www.cloudflare.com/learning/ddos/glossary/open-systems-interconnection-model-osi/

- Organ Systems: https://biologydictionary.net/organ-system/

- Vital Signs: https://www.hopkinsmedicine.org/health/conditions-and-diseases/vital-signs-body-temperature-pulse-rate-respiration-rate-blood-pressure

- The 10-Point Pain Scale: https://www.affirmhealth.com/blog/pain-scales-from-faces-to-numbers-and-everywhere-in-between

Various Information Security Models

- The Investigation Process: https://chrissanders.org/2016/05/how-analysts-approach-investigations/

- Diamond Model of Intrusion Analysis: http://www.activeresponse.org/wp-content/uploads/2013/07/diamond.pdf

- MITRE ATT&CK: https://attack.mitre.org/

- Defense in Depth: https://www.us-cert.gov/bsi/articles/knowledge/principles/defense-in-depth

- Pyramid of Pain: http://detect-respond.blogspot.com/2013/03/the-pyramid-of-pain.html

- Cyber Kill Chain: https://www.lockheedmartin.com/en-us/capabilities/cyber/cyber-kill-chain.html

- Evidence Intention: https://chrissanders.org/2018/10/the-role-of-evidence-intention/

- PICERL Incident Response Process: https://www.sans.org/media/score/504-incident-response-cycle.pdf

Learn More

I teach information security mental models in several of my classes. The two most popular are Investigation Theory, which teaches you how to think in the mindset of a security analyst, and Practical Threat Hunting, which teaches you how to approach threat hunting in a structured, tool-agnostic manner. You can learn about both courses on my training page.
